Saliency maps for CNNs

Give some intuition about where the network "pays attention"

First introduced in 2013

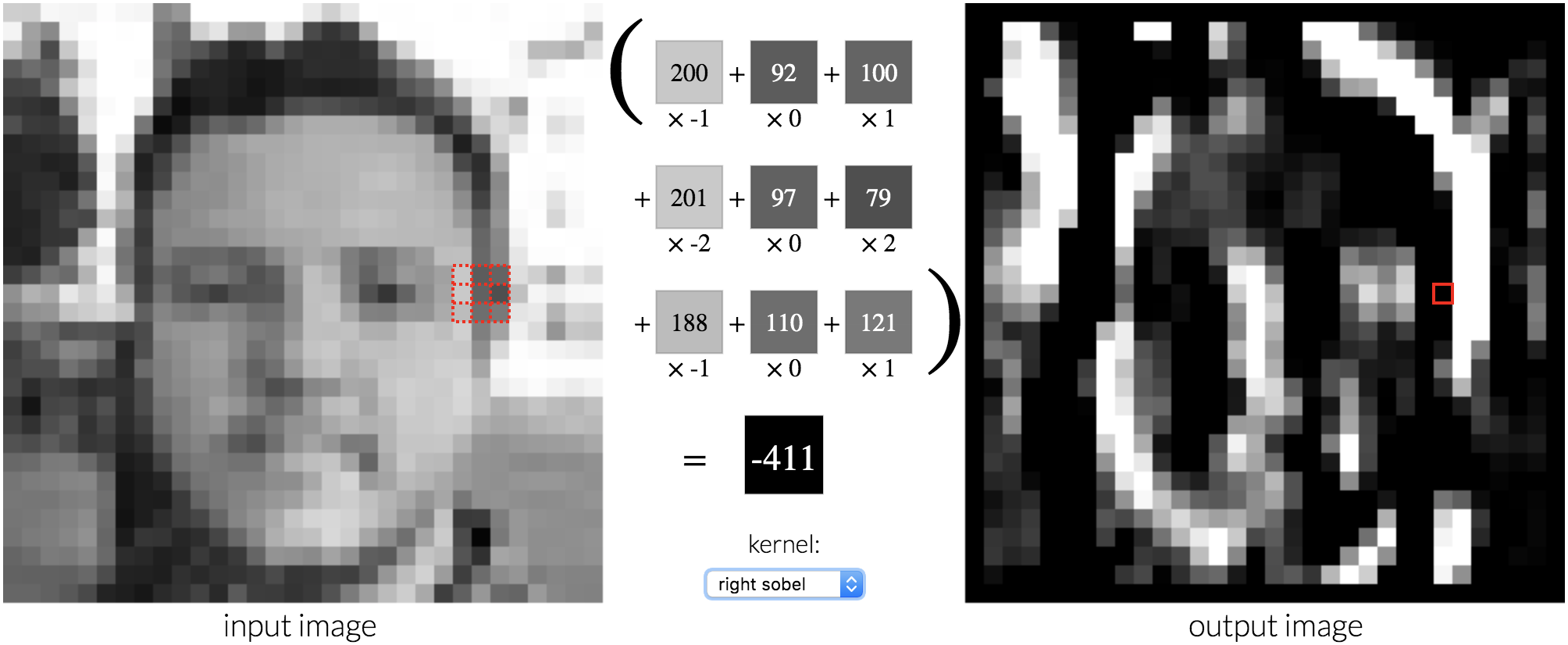

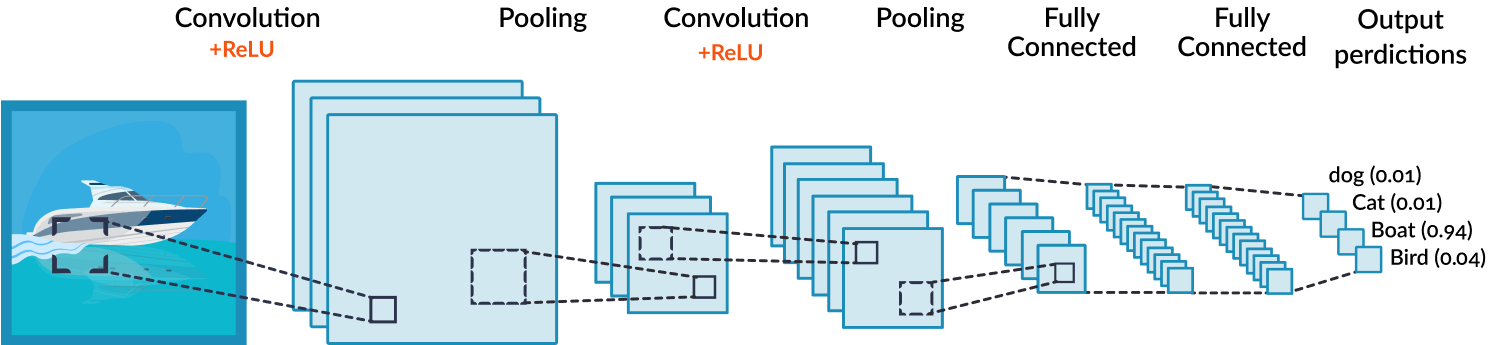

Calculate the gradients of the output label's score with respect to the input image pixels

Positive gradient == increasing that pixel's value has a positive effect on the confidence of the label
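To make the "positive gradient" idea concrete, here is a minimal sketch on a toy two-class model. Everything in it is hypothetical (the weights, the 2x2 "image", the function names), and it estimates gradients by finite differences only so that it needs no autograd library; saliency tools obtain the same quantity through backpropagation.

```python
import math

# Hypothetical toy "classifier": one weight per pixel for each of 2 classes.
WEIGHTS = [
    [0.9, -0.4, 0.1, 0.0],   # class 0
    [-0.2, 0.7, 0.0, 0.3],   # class 1
]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def confidence(pixels, label):
    """Probability the toy model assigns to `label` for this image."""
    scores = [sum(w * p for w, p in zip(row, pixels)) for row in WEIGHTS]
    return softmax(scores)[label]


def saliency(pixels, label, eps=1e-5):
    """Central finite-difference estimate of d(confidence) / d(pixel)."""
    grads = []
    for i in range(len(pixels)):
        up = list(pixels)
        up[i] += eps
        down = list(pixels)
        down[i] -= eps
        grads.append((confidence(up, label) - confidence(down, label)) / (2 * eps))
    return grads


image = [0.5, 0.2, 0.8, 0.1]  # a flattened 2x2 "image"
grads = saliency(image, label=0)

# The pixel with the largest positive gradient is the most "salient" one.
top = max(range(len(grads)), key=lambda i: grads[i])

# Brightening that pixel should raise the confidence in the label.
brighter = list(image)
brighter[top] += 0.1
print(confidence(image, 0), confidence(brighter, 0))
```

A saliency map is this list of per-pixel gradients reshaped back into the image layout and plotted.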

Introducing FlashTorch

Open source feature visualization toolkit

Supports torchvision models & custom PyTorch models

Available to install via pip

$ pip install flashtorch

Prepare an input 🐦

import matplotlib.pyplot as plt

from flashtorch.utils import load_image, apply_transforms, denormalize, format_for_plotting

image = load_image('../../examples/images/great_grey_owl.jpg')

owl = apply_transforms(image)

print(f'Before: {type(image)}')

print(f'After: {type(owl)}, {owl.shape}')

plt.imshow(format_for_plotting(denormalize(owl)))

plt.title('Input tensor')

plt.axis('off');

Before: <class 'PIL.Image.Image'>

After: <class 'torch.Tensor'>, torch.Size([1, 3, 224, 224])
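Why call `denormalize` before plotting? A rough sketch, assuming the transform normalizes each channel with the standard ImageNet mean and standard deviation used for torchvision's pre-trained models. The helper names below are hypothetical and this is not FlashTorch's own code.

```python
# Standard ImageNet channel statistics (R, G, B) for torchvision models.
IMAGENET_MEAN = [0.485, 0.456, 0.406]
IMAGENET_STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb):
    """Map an RGB pixel in [0, 1] to the zero-centred range the model expects."""
    return [(v - m) / s for v, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)]


def denormalize_pixel(rgb):
    """Invert normalize_pixel so the values are displayable again."""
    return [v * s + m for v, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)]


pixel = [0.2, 0.5, 0.9]
normalized = normalize_pixel(pixel)       # can be negative, unsuitable for imshow
restored = denormalize_pixel(normalized)  # back in [0, 1]
print(normalized, restored)
```

Normalized values fall outside the [0, 1] range that `plt.imshow` expects for float images, so the inverse step is needed purely for display.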

Create a Backprop object

from torchvision import models

from flashtorch.saliency import Backprop

model = models.alexnet(pretrained=True)

backprop = Backprop(model)

Visualize saliency maps

from flashtorch.utils import ImageNetIndex

imagenet = ImageNetIndex()

target_class = imagenet['great grey owl']

backprop.visualize(owl, target_class, guided=True)
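The `guided=True` flag presumably switches on guided backpropagation. As a hedged sketch of that general technique (not FlashTorch's implementation, and with hypothetical function names), the only change from ordinary backpropagation happens at each ReLU: a gradient is passed back only where the forward input was positive and the incoming gradient is positive too.

```python
def relu_backward(forward_input, grad_output):
    """Ordinary ReLU backward pass: block gradients where the input was <= 0."""
    return [g if x > 0 else 0.0 for x, g in zip(forward_input, grad_output)]


def guided_relu_backward(forward_input, grad_output):
    """Guided variant: additionally block negative incoming gradients."""
    return [g if (x > 0 and g > 0) else 0.0
            for x, g in zip(forward_input, grad_output)]


forward_input = [1.5, -0.3, 2.0, 0.7]   # values that entered the ReLU
grad_output = [0.4, 0.9, -0.6, 0.2]     # gradients arriving from the layer above

plain = relu_backward(forward_input, grad_output)
guided = guided_relu_backward(forward_input, grad_output)
print(plain)   # [0.4, 0.0, -0.6, 0.2]
print(guided)  # [0.4, 0.0, 0.0, 0.2]
```

Dropping the negative gradients tends to give cleaner maps, because only evidence in favour of the class reaches the input.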

What about other birds?

What makes a peacock a peacock?

peacock = apply_transforms(load_image('../../examples/images/peacock.jpg'))

target_class = imagenet['peacock']

backprop.visualize(peacock, target_class, guided=True)

... or a toucan?

toucan = apply_transforms(load_image('../../examples/images/toucan.jpg'))

target_class = imagenet['toucan']

backprop.visualize(toucan, target_class, guided=True)

What about when it gets things wrong?

Is FlashTorch still useful?

Building a flower classifier

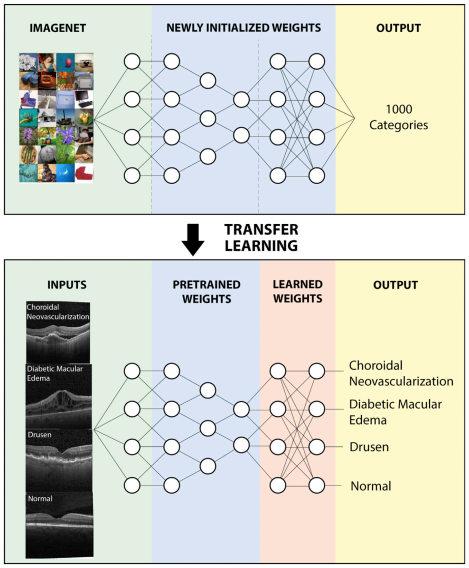

- Get a model, pre-trained with the ImageNet dataset (1000 classes)

- Swap out the last layer (102 classes) --> Un-tuned

- Train with a flower dataset --> Tuned

Un-tuned model: 0.1% accuracy on the test dataset 😅

Why is it so bad? Let's take a look.

plt.imshow(load_image('images/foxgloves.jpg'))

plt.title('Foxgloves')

plt.axis('off');

backprop = Backprop(untuned_model)

# foxglove: the foxglove image after apply_transforms; class_index: the index of its true class

backprop.visualize(foxglove, class_index, guided=True)

/Users/misao/Projects/personal/flashtorch/flashtorch/saliency/backprop.py:111: UserWarning: The predicted class index 98 does not equal the target class index 96. Calculating the gradient w.r.t. the predicted class.

'the gradient w.r.t. the predicted class.'
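The warning describes a fallback: when the model's top prediction differs from the requested target, the gradient is taken with respect to the prediction instead. A minimal sketch of that logic, using a hypothetical helper rather than FlashTorch's actual code:

```python
import warnings


def resolve_target_class(scores, target_class):
    """Return the class index to compute gradients for.

    Falls back to the predicted class (the argmax of the scores)
    when it differs from the requested target.
    """
    predicted = max(range(len(scores)), key=lambda i: scores[i])
    if predicted != target_class:
        warnings.warn(
            f"The predicted class index {predicted} does not equal the "
            f"target class index {target_class}. Calculating the gradient "
            "w.r.t. the predicted class."
        )
        return predicted
    return target_class


# Toy scores for three classes: the model prefers class 2, not the target 0.
chosen = resolve_target_class([0.1, 0.2, 0.7], target_class=0)
print(chosen)  # 2
```

So the map for the un-tuned model shows what drove its wrong prediction (class 98), not what it would need to see for the true class (96).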

After training, the tuned model achieved >98% test accuracy 🎉

But how? What is it seeing now? 🤔

backprop = Backprop(tuned_model)

backprop.visualize(foxglove, class_index, guided=True)

Show, don't tell - visualization is a powerful tool.

Model explainability

With feature visualization, we're better equipped to:

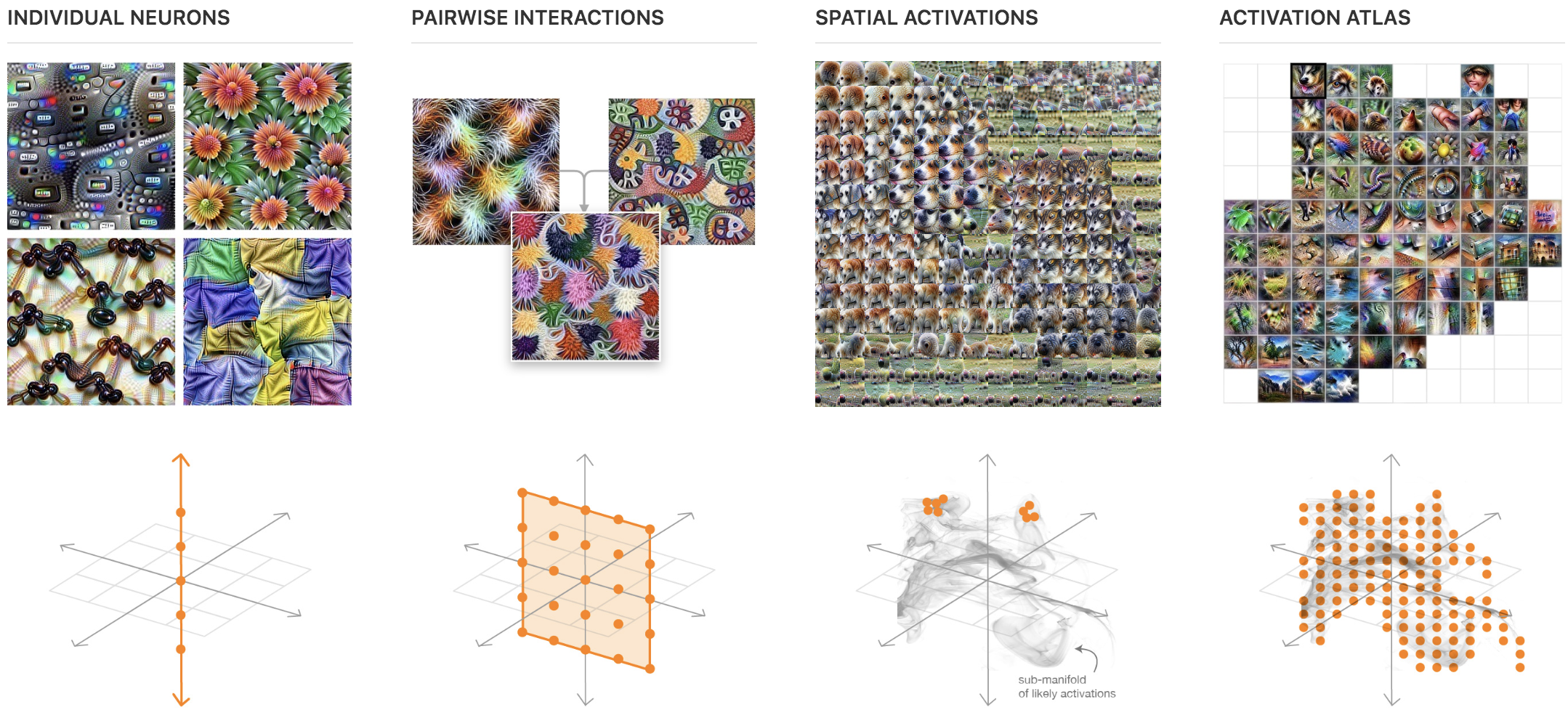

Focus on the mechanisms of how & what neural nets learn

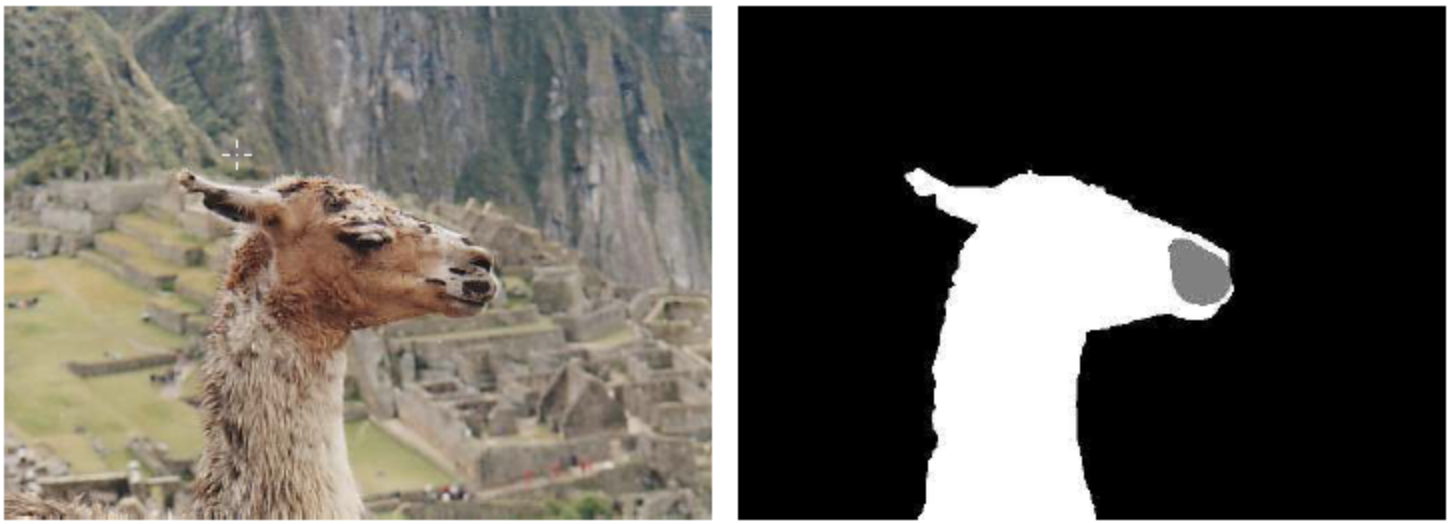

Diagnose what the network gets wrong, and why

Spot and correct biases in algorithms

A step forward towards understanding & trusting AI 😌

Thank you 🙏

Check out FlashTorch on GitHub

Suggestions & contributions welcome!

📡 Slide deck @ tinyurl.com/flashtorch-ldn-meetup